Understanding AI Data Privacy Risks: A Simple Guide for Businesses and Users

AI data privacy enforcement is hitting businesses where it hurts. Italy fined OpenAI €15 million for GDPR violations in late 2024, marking the first major generative AI penalty. Meanwhile, Stanford’s 2025 AI Index Report revealed a staggering 56.4% increase in AI-related incidents, with 233 reported cases throughout 2024 alone. These aren’t just statistics – they represent real fines, legal battles, and damaged trust that businesses can’t afford to ignore.

What Makes AI Data Privacy So Different?

Traditional data systems collect what they need and use it for specified purposes. AI systems operate differently. They consume massive datasets – sometimes billions of images like Clearview AI’s facial recognition database – and generate insights or decisions that users never explicitly consented to. Unlike a simple customer database, AI can infer sensitive details about health, relationships, or behavior from seemingly harmless information.

The core challenge lies in AI’s “black box” nature. When an AI system denies someone credit or flags them for security screening, explaining exactly how that decision was made becomes nearly impossible. This opacity directly conflicts with privacy laws like GDPR that demand transparency and user control over automated decisions.

Privacy regulations weren’t designed for systems that learn and evolve. GDPR’s data minimization principle requires collecting only necessary information, but AI models typically perform better with more data. This fundamental tension creates compliance headaches for businesses trying to innovate responsibly.

The Biggest AI Data Privacy Risks Today

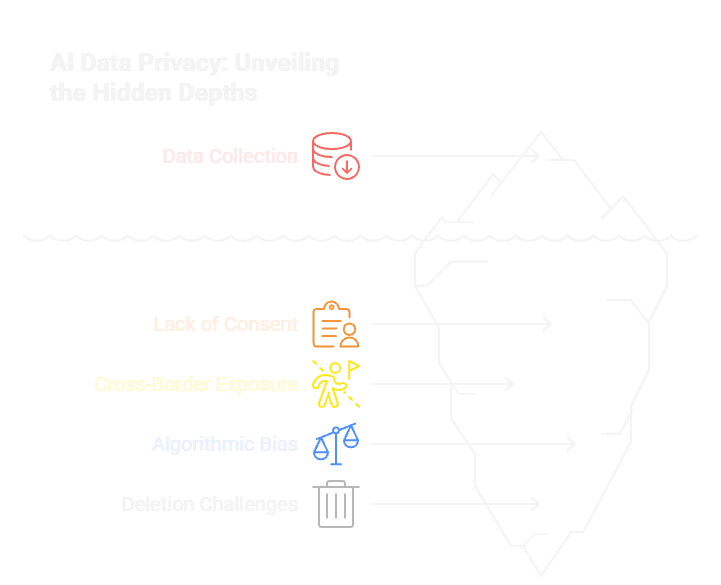

Unauthorized Data Collection and Training

AI companies scrape public websites, social media, and databases to train models, often without user knowledge or consent. Clearview AI collected over 50 billion facial images from public sources, resulting in multiple GDPR fines totaling over €90 million across Europe.

Lack of Meaningful Consent

Traditional cookie banners and privacy policies don’t cover AI’s complex data uses. Users might agree to “data processing” without understanding their conversations will train chatbots or their images will improve facial recognition systems.

Cross-Border Data Exposure

AI systems often process data across multiple countries and cloud providers, creating jurisdictional nightmares. Companies struggle to track where personal data flows, especially when training happens on distributed systems.

Algorithmic Bias and Discrimination

AI models can perpetuate or amplify biases in training data, leading to unfair treatment of protected groups. This creates both privacy violations and potential civil rights issues.

Data Persistence and Deletion Challenges

Once personal data trains an AI model, removing that information becomes technically complex or impossible. Even if companies delete source files, the “learned” patterns remain embedded in model parameters.

Real-World Impact: Recent Enforcement Cases

Italy’s privacy authority has emerged as an aggressive AI enforcer, fining OpenAI €15 million for ChatGPT violations in late 2024 and Luka Inc. €5 million for its Replika AI companion in 2025. The Netherlands imposed a €30.5 million penalty on Clearview AI, with an additional €5.1 million threatened for continued non-compliance.

These cases share common themes: lack of legal basis for data processing, insufficient transparency about AI training, and failure to implement proper consent mechanisms.

How Businesses Can Protect Themselves

Conduct AI-Specific Privacy Impact Assessments

Standard privacy reviews aren’t enough. Map exactly what data your AI systems collect, how they process it, and what inferences they generate. Document these flows and update assessments as models evolve.
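One way to make such a data-flow map concrete is a small, reviewable inventory. The sketch below is illustrative only: the `DataFlow` fields, the `SENSITIVE` category list, and the `needs_review` rule are assumptions made for this example, not a regulatory standard or a complete assessment.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical list of categories treated as sensitive for this sketch;
# a real list should come from your legal and privacy teams.
SENSITIVE = {"health", "biometric", "sexual_orientation", "political_opinion"}


@dataclass
class DataFlow:
    system: str                        # AI system or model name
    collected: list[str]               # categories of data the system ingests
    purpose: str                       # why the data is processed
    inferences: list[str] = field(default_factory=list)  # what the model derives
    legal_basis: Optional[str] = None  # e.g. "consent"; None if undocumented


def needs_review(flow: DataFlow) -> list[str]:
    """Return the reasons a flow should be escalated (empty list if none)."""
    reasons = []
    if flow.legal_basis is None:
        reasons.append("no documented legal basis")
    # Sensitive data counts whether it is collected directly or inferred.
    touched = SENSITIVE & (set(flow.collected) | set(flow.inferences))
    if touched:
        reasons.append("sensitive categories: " + ", ".join(sorted(touched)))
    return reasons


flows = [
    DataFlow("support-chatbot", ["chat_text", "email"], "answer tickets",
             inferences=["health"], legal_basis="contract"),
    DataFlow("recommender", ["purchase_history"], "product suggestions"),
]
for f in flows:
    print(f.system, needs_review(f))
```

Recording inferences alongside collected data matters here because a model can derive sensitive details from inputs that look harmless on their own.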

Implement Granular Consent Systems

Move beyond blanket permissions to specific, informed choices. Let users consent to AI training separately from basic service use. Provide clear explanations of how their data improves AI capabilities.
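As a rough illustration of what "granular" can mean in practice, the sketch below stores consent per purpose rather than as a single flag, so AI training can be granted or withdrawn independently of basic service use. The purpose names and the `ConsentRecord` class are hypothetical, chosen only for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical purposes; each one is consented to separately.
PURPOSES = ("service_delivery", "ai_training", "personalization")


@dataclass
class ConsentRecord:
    user_id: str
    # Maps each granted purpose to the time consent was given (for audit trails).
    granted: dict[str, datetime] = field(default_factory=dict)

    def grant(self, purpose: str) -> None:
        if purpose not in PURPOSES:
            raise ValueError(f"unknown purpose: {purpose}")
        self.granted[purpose] = datetime.now(timezone.utc)

    def withdraw(self, purpose: str) -> None:
        # Withdrawing a purpose that was never granted is a no-op.
        self.granted.pop(purpose, None)

    def allows(self, purpose: str) -> bool:
        return purpose in self.granted


def training_eligible(records: list[ConsentRecord]) -> list[str]:
    """Return IDs of users whose data may be used for AI training."""
    return [r.user_id for r in records if r.allows("ai_training")]


alice = ConsentRecord("alice")
alice.grant("service_delivery")
alice.grant("ai_training")
bob = ConsentRecord("bob")
bob.grant("service_delivery")  # bob uses the service but opted out of training

print(training_eligible([alice, bob]))
```

Filtering on the `ai_training` purpose at the point where training data is assembled keeps the opt-out enforceable rather than merely recorded.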

Adopt Privacy-Preserving AI Techniques

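Explore federated learning, differential privacy, and synthetic data generation to reduce dependence on personal information. Federated learning trains models where the data lives and shares only model updates, differential privacy adds calibrated noise so individual records are hard to single out, and synthetic data substitutes generated records for real ones. These approaches can preserve much of a model’s performance while lowering privacy risk.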
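To give a feel for one of these techniques, here is a minimal sketch of differential privacy applied to a simple count, using the Laplace mechanism. The `private_count` function and the sample ages are made up for illustration, and production systems should rely on a vetted differential privacy library rather than hand-rolled noise.

```python
import random
from typing import Callable, Iterable, Optional


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponential samples with rate
    # 1/scale follows a Laplace distribution centred on 0 with that scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values: Iterable, predicate: Callable[[object], bool],
                  epsilon: float, rng: Optional[random.Random] = None) -> float:
    """Return a noisy count of values matching predicate.

    Adding or removing one person changes a count by at most 1
    (sensitivity 1), so the Laplace noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Made-up sample data: how many users are over 40?
ages = [23, 35, 41, 52, 29, 63, 38]
noisy = private_count(ages, lambda a: a > 40, epsilon=0.5)
print(f"noisy count: {noisy:.2f} (true count is 3)")
```

A smaller `epsilon` means more noise and stronger privacy, while a larger one gives more accurate answers with weaker protection, which is the same accuracy and privacy trade-off the section describes.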

Establish AI Governance Frameworks

Create cross-functional teams including legal, privacy, and technical experts to oversee AI deployments. Develop internal policies for AI data use and regular compliance reviews.

Monitor and Audit Continuously

AI systems evolve through training and updates. Implement ongoing monitoring for privacy compliance, bias detection, and unexpected data exposures. Document all changes for regulatory purposes.

What Users Should Know and Demand

Understand Your AI Data Rights

Under GDPR and similar laws, you can request access to data used for AI training, object to automated profiling, and demand corrections to inaccurate AI-generated information. Exercise these rights actively.

Look Beyond Cookie Banners

Read privacy policies specifically for AI and machine learning sections. Look for explanations of how your data trains models and what choices you have about that use.

Stay Alert for AI Processing

Many services use AI behind the scenes without obvious disclosure. Watch for personalized recommendations, automated content moderation, or risk scoring that might indicate AI processing of your data.

The Road Ahead: Regulatory Trends

AI-specific regulations are accelerating globally. The EU AI Act creates risk-based compliance requirements, while U.S. states develop their own AI privacy laws. Expect stricter transparency requirements, mandatory AI impact assessments, and higher penalties for violations.

Organizations that wait for perfect regulatory clarity will fall behind. The enforcement trend shows privacy authorities are applying existing laws aggressively while new AI-specific rules develop.

Taking Action Now

AI data privacy is not a future concern – it’s a current compliance requirement with real financial consequences. Businesses must audit their AI systems today, implement proper consent mechanisms, and establish governance frameworks before facing their own €15 million fine.

Users should exercise their privacy rights actively and demand transparency from AI services they use. When you spot potential violations, report them to privacy authorities or platforms like ConsentWatch that help make privacy violations visible.

The balance between AI innovation and privacy protection requires ongoing effort from everyone. Stay informed about new developments, implement robust safeguards, and remember that responsible AI deployment builds trust that benefits both businesses and users in the long run.

Ready to Strengthen Your AI Privacy Strategy?

Don’t wait for your organization to become the next headline-grabbing fine. ConsentWatch offers expert guidance, compliance audits, and privacy assessments tailored specifically for AI-driven businesses. Whether you need help implementing granular consent systems, conducting AI-specific privacy impact assessments, or staying current with rapidly evolving regulations, our team has the expertise to keep you compliant and competitive.